One Database Per Service

I've had to work with engineers across different areas of microservices lately to help them operate more efficiently. Time and time again, I tell them the same thing: a microservice should be responsible for a single business capability, and the boundary should be clear.

Independent deployments require independent schemas. If two services share a data schema, they share a reason to change. You just coupled change at the data layer.

Fowler echoes this in his writing on microservices, stating that each service should manage its own database. He also highlights polyglot persistence, where an enterprise uses different data storage strategies based on the specific needs of each service. Clear component boundaries let you abstract the storage technology so the rest of the system can interact with data through contracts, rather than through table knowledge.

Chris Richardson makes the same point: services should keep persistent data private and only accessible through the service API, not by direct database access. A shared schema is an integration mechanism, and it is a very expensive one.
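Here is a minimal sketch of what "private data behind an API" can look like, assuming a hypothetical `LocationService` backed by an in-memory SQLite database. The class, table, and column names are made up for illustration. Callers only ever see the `Country` contract, so the table shape can change without anyone else noticing.

```python
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Country:
    """The public contract: the only shape callers depend on."""
    code: str
    name: str


class LocationService:
    """Hypothetical service that is the sole owner of its schema."""

    def __init__(self) -> None:
        # Private store: no other service is handed this connection.
        self._db = sqlite3.connect(":memory:")
        self._db.execute(
            "CREATE TABLE country (iso_code TEXT PRIMARY KEY, display_name TEXT)"
        )
        self._db.executemany(
            "INSERT INTO country VALUES (?, ?)",
            [("US", "United States"), ("CA", "Canada")],
        )

    def get_country(self, code: str) -> Optional[Country]:
        # Column names stay internal; they are mapped to the contract here.
        row = self._db.execute(
            "SELECT iso_code, display_name FROM country WHERE iso_code = ?",
            (code,),
        ).fetchone()
        return Country(code=row[0], name=row[1]) if row else None


service = LocationService()
canada = service.get_country("CA")
```

If `display_name` is renamed tomorrow, only `get_country` changes. In a real system the contract would sit behind HTTP or messaging rather than a method call, but the ownership rule is the same.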

For the past few months I've had a Post-it note on my monitor reminding me to talk with my team about data ownership, and how it should really be called "data sovereignty". That term connects with me because it forces the team to choose what data makes sense for them. For example, if I have a service that stores a list of states and countries, I don't really care about data related to inventory.

Using a Shared Database

"Alright, Larry. This sounds like a pain and like you're being dogmatic about microservices. I'm using a shared database anyway."

Okay. Let's play this out.

Initially, this is going to feel really good. It allows the system to behave like one tidy unit. Reports are easy because you can write a single SQL query joining across different domains. Referential integrity can exist across those domains. Good times.

But then comes the coordination tax.

Who owns the schema? Is it now your DBA team? What happens when one backlog item depends on a schema change that also impacts other services? What happens when Service A introduces a change that is perfectly reasonable for its domain, but breaks Service B because Service B was reading Service A tables through a join?
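To make that last question concrete, here is a small sketch using Python's built-in `sqlite3` module. The `orders` and `shipments` tables and the two "services" are hypothetical stand-ins. Service A makes a change that is sensible for its own domain, and Service B's join stops working.

```python
import sqlite3

# One shared database used by two hypothetical services.
shared = sqlite3.connect(":memory:")
shared.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_email TEXT);
    CREATE TABLE shipments (id INTEGER PRIMARY KEY, order_id INTEGER);
    INSERT INTO orders VALUES (1, 'pat@example.com');
    INSERT INTO shipments VALUES (10, 1);
""")


def service_b_shipping_report(db):
    # Service B reaches into Service A's table through a join.
    return db.execute("""
        SELECT s.id, o.customer_email
        FROM shipments s JOIN orders o ON o.id = s.order_id
    """).fetchall()


before = service_b_shipping_report(shared)  # works today

# Service A renames a column by rebuilding its own table.
shared.executescript("""
    CREATE TABLE orders_v2 (id INTEGER PRIMARY KEY, contact_email TEXT);
    INSERT INTO orders_v2 SELECT id, customer_email FROM orders;
    DROP TABLE orders;
    ALTER TABLE orders_v2 RENAME TO orders;
""")

try:
    service_b_shipping_report(shared)
    service_b_broke = False
except sqlite3.OperationalError as exc:
    # Service B fails at runtime even though its own code never changed.
    service_b_broke = True
    error = str(exc)
```

Nothing in Service A's code review would have flagged this. The dependency lived in Service B's SQL, which is exactly why the schema ends up needing a committee.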

And it gets worse with long-running processes.

If one service kicks off a long-running process that holds locks in the shared database, another service can get blocked waiting on those same resources. Even if the services are deployed separately, they are now coupled at runtime through database contention.

I'm not even going to get into all the technical approaches and operational strategies for databases here. That could be a blog series on its own.

With this approach, you have turned your system into a distributed monolith. Great job: you split the application, but because you coupled the data, you coupled the release train. This is another way to get the worst of both worlds.

If your service should have a single reason to change, a shared schema produces many reasons to change for everyone involved.

Data Sovereignty

It is all about data sovereignty. When we say database per service, we mean the service is the sole owner of its data and schema. That service is the only actor allowed to change it. Sure, you can run multiple databases on the same physical server or managed platform, but the real constraint is ownership and access boundaries.

Changes to your data can have an autonomous lifecycle when each service owns that domain's data. When you define your domains well, this is usually straightforward. But if one service needs data from another service, you now need to evaluate how integrations behave. That is not always cut and dried.

Data Duplication

One of the first things I was passionate about early in my career was database normalization. I remember spending a ton of time trying to get to some normal form to reduce redundancy, plus leaning heavily on referential integrity. Nowadays, I would struggle to define what is and is not part of Second Normal Form from memory. Many mid-level engineers have never even heard of normalization.

But in a microservices world, where each service owns its data, the overall system will have duplication. It is the nature of the beast. Often, that duplication is intentional and performance friendly. It can also support autonomy by allowing a service to answer questions without synchronously calling other services on every request.

It took me a while to accept this, because I had "data duplication is wrong" burned into my brain. In distributed systems, the real issue is often coupling, not duplication.

We see eventual consistency all the time in large systems and do not even think about it. Ever unsubscribe from an email list and get a message saying it may take 48 hours to go into effect? In that system, there might be a settings service and a scheduled mail service. The point is not that the mail service checks the settings service every time it sends an email. Updates flow asynchronously, which leads to eventual consistency. How you implement that flow depends on your system.

Keep in mind that when you have independent services with independent data (even if some of it is duplicated), not everything is always in sync down to the millisecond. An event-driven approach can give you eventual consistency across services without relying on distributed transactions.
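The unsubscribe example can be sketched in a few lines. This is an illustration under assumptions, not a real design: `SettingsService`, `MailService`, and the `Unsubscribed` event are hypothetical names, and a plain `deque` stands in for whatever message broker you actually run.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Unsubscribed:
    """Event published by the (hypothetical) settings service."""
    email: str


class SettingsService:
    def __init__(self, bus: deque) -> None:
        self._unsubscribed = set()  # the settings service's own data
        self._bus = bus

    def unsubscribe(self, email: str) -> None:
        self._unsubscribed.add(email)
        self._bus.append(Unsubscribed(email))  # publish, do not call mail directly


class MailService:
    def __init__(self) -> None:
        self._suppressed = set()  # intentionally duplicated local copy

    def handle(self, event: Unsubscribed) -> None:
        self._suppressed.add(event.email)

    def should_send(self, email: str) -> bool:
        # Answered from local data, with no synchronous call to settings.
        return email not in self._suppressed


bus = deque()  # stand-in for a message broker
settings = SettingsService(bus)
mail = MailService()

settings.unsubscribe("pat@example.com")
stale = mail.should_send("pat@example.com")      # event not delivered yet

while bus:                                       # the consumer catches up
    mail.handle(bus.popleft())
converged = mail.should_send("pat@example.com")  # local copy has converged
```

The window between `stale` and `converged` is the "may take 48 hours" in that email footer. The duplication in `_suppressed` is what lets the mail service keep working if the settings service is down.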

ACID (atomic, consistent, isolated, durable) transactions generally do not exist across service boundaries. You can get ACID behavior inside a service and its own database. The moment you cross into another domain and another data store, there is no guarantee that everything stays perfectly consistent at the same instant. You design for convergence over time.
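Inside a single service, you still get the guarantees you are used to. Here is a small sketch with Python's built-in `sqlite3` module, using a made-up `account` table. Both updates commit together or neither does.

```python
import sqlite3

# A single service's private database (in-memory for the sketch).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account (id TEXT PRIMARY KEY, "
    "balance INTEGER CHECK (balance >= 0))"
)
conn.execute("INSERT INTO account VALUES ('a', 100), ('b', 0)")
conn.commit()


def transfer(amount: int) -> bool:
    try:
        # "with conn" commits on success and rolls back on any exception.
        with conn:
            conn.execute(
                "UPDATE account SET balance = balance + ? WHERE id = 'b'",
                (amount,),
            )
            conn.execute(
                "UPDATE account SET balance = balance - ? WHERE id = 'a'",
                (amount,),
            )
        return True
    except sqlite3.IntegrityError:
        return False  # CHECK constraint failed; the credit to 'b' was undone


def balances() -> dict:
    return dict(conn.execute("SELECT id, balance FROM account"))


ok = transfer(150)        # would overdraw 'a', so the whole transfer rolls back
after_failed = balances()
ok_small = transfer(40)   # both updates commit together
after_success = balances()
```

If account `b` lived in a different service's database, the `with conn:` block could not cover it. That is the point where you stop reaching for a transaction and start designing for convergence.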

CQRS helps, but it is not that simple

Every application eventually needs something like a dashboard that cuts across multiple domains. You do not want a UI making five network calls and stitching together a view, and you also cannot do a SQL join across service boundaries because you are not allowed to reach into another service's data store. The data inside a service is private and only accessible through its API.

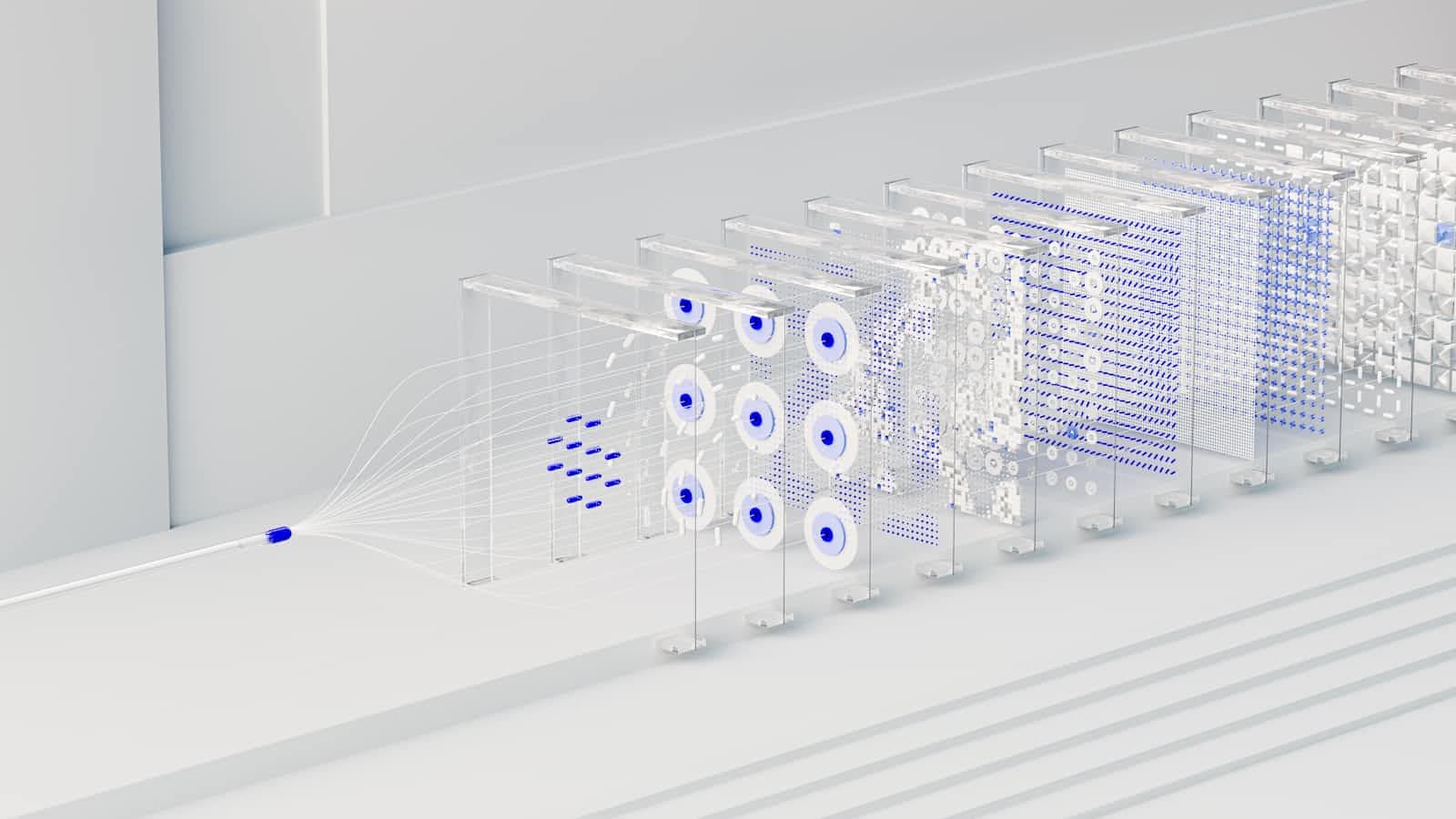

CQRS (Command Query Responsibility Segregation) can help.

Fowler describes CQRS as separating models for reads and writes. In practice, this often means your write model stays focused on enforcing business rules within a service boundary, while a separate read model is optimized for querying.

That read model is frequently built from events published by multiple services. You consume those events and build a projection that is already shaped like the query you want to run. Then your query path reads from that projection instead of trying to query the domain model directly.

A purpose-built read model like this can be extremely useful, but it introduces more moving parts: event publication, event consumption, projection rebuilds, and debugging eventual consistency issues.
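As a rough sketch of a projection, assume two hypothetical events, `OrderPlaced` from an ordering service and `ShipmentDispatched` from a shipping service. The event names, fields, and the in-memory dictionary are all illustrative. A real read model would sit in its own store and be fed by a broker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderPlaced:            # hypothetical event from an ordering service
    order_id: str
    customer: str
    total_cents: int


@dataclass(frozen=True)
class ShipmentDispatched:     # hypothetical event from a shipping service
    order_id: str
    carrier: str


class OrderDashboardProjection:
    """Read model already shaped like the dashboard query."""

    def __init__(self) -> None:
        self._rows = {}

    def apply(self, event) -> None:
        if isinstance(event, OrderPlaced):
            self._rows[event.order_id] = {
                "customer": event.customer,
                "total_cents": event.total_cents,
                "status": "placed",
                "carrier": None,
            }
        elif isinstance(event, ShipmentDispatched):
            row = self._rows.get(event.order_id)
            # This sketch ignores shipments that arrive before their order;
            # a real projection needs a policy for out-of-order events.
            if row is not None:
                row["status"] = "shipped"
                row["carrier"] = event.carrier

    def rows(self) -> list:
        return [dict(order_id=k, **v) for k, v in sorted(self._rows.items())]


def rebuild(events) -> OrderDashboardProjection:
    # A projection rebuild is just a replay of the event log from the start.
    projection = OrderDashboardProjection()
    for event in events:
        projection.apply(event)
    return projection


event_log = [
    OrderPlaced("o-1", "Pat", 2500),
    OrderPlaced("o-2", "Sam", 990),
    ShipmentDispatched("o-1", "UPS"),
]
dashboard = rebuild(event_log).rows()
```

The dashboard query is now a read of `rows()`, with no joins and no calls into either owning service. The cost shows up in the comment inside `apply`: ordering, replays, and stale rows are now your problem.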

CQRS can be a topic on its own, including when to use it, when not to use it, and how to keep it from turning into accidental complexity.

How to do this

As I said earlier, polyglot persistence in microservices means I do not really care what technologies you choose. You should do what is right for your project based on requirements and team capability.

With the maturity of code-first approaches to database design and the availability of schemaless databases, your schema can evolve with your application instead of requiring tightly coordinated database changes at the exact moment you need them.

I'm still big on referential integrity and I love relational databases. But I also recognize that I do not always know what my schema will look like on a project. On personal projects especially, I often ingest data I did not fully anticipate. In those cases, I tend to lean toward schemaless storage when I am experimenting and learning.

For CQRS read models, document databases can also be a great fit because denormalization is the point. You can evolve the read model over time without constantly fighting relational schema changes that introduce unnecessary coupling.

Bringing it back to SRP for microservices

This comes back to SOLID and the Single Responsibility Principle (SRP) for microservices. Once you see it, it should feel obvious why each service needs its own schema.

Service boundaries allow a clean separation of concerns across responsibilities and data ownership. Your API enables interaction with that data while keeping responsibility within the owning application.

If your data is shared in the same database, it is not a real boundary. It is a facade with separate deployment units that still share the same change surface area, and it will become a mess. Stop bringing a mess into your codebase.

You want loose coupling. Deliberate data ownership gives you that, and it keeps teams and systems honest.

I get it. If you're experimenting at the proof-of-concept stage, a shared database might feel like the fastest path. I've just seen too many proofs of concept make their way to production, never get cleaned up, and then nobody wants to pay to fix them because "it works".